When creating a multi-deployment platform in Kubernetes, you will quickly realise how many LoadBalancer-type Services you have deployed. This is usually not a problem in a self-built, self-hosted cluster, because you control how things get created. The problem appears with managed Kubernetes offerings such as Alibaba Cloud ACK, which creates an SLB (Server Load Balancer) every time you expose a Deployment through a LoadBalancer-type Service. This is similar to the challenges we’ve discussed in our article on best practices for dealing with large Docker images in Alibaba Cloud Kubernetes.

In the beginning, Alibaba Cloud offered no clean way to expose applications to the outside world other than spinning up an SLB for each LoadBalancer-type Service. That meant an extra layer of complexity in maintenance and more points of failure. Now the provider offers another way to route requests into your applications. In this post we focus on Alibaba Cloud ACK, but a similar configuration can be applied to other managed Kubernetes offerings. This approach aligns with Alibaba Cloud’s broader strategy of making cloud services more accessible, as we’ve seen with their DevOps tools and Container Registry solutions.

Wait, what is a Load Balancer?

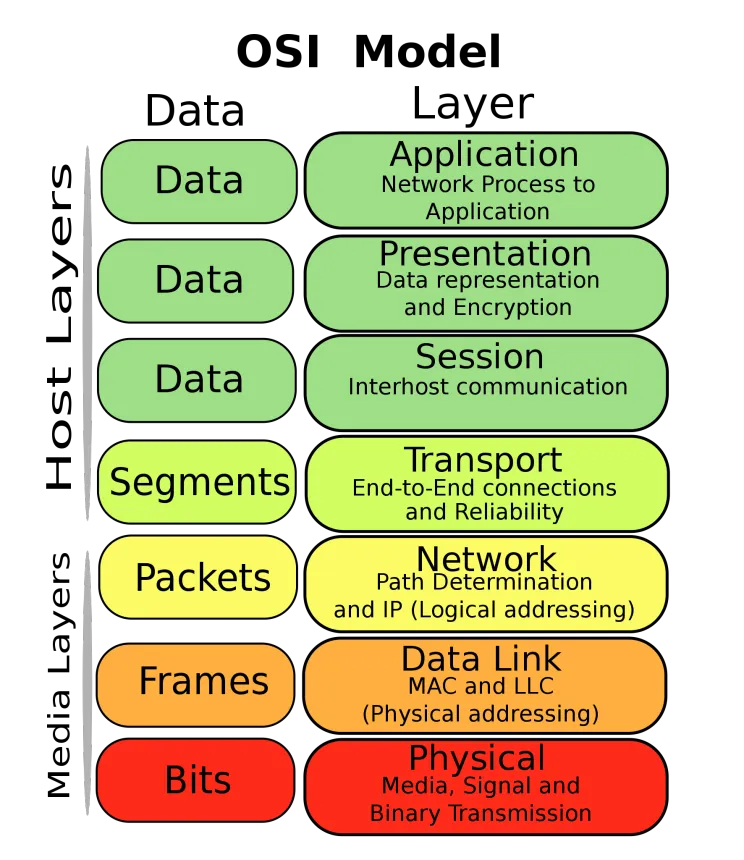

OK, if you landed on this article, you are probably having trouble setting up load balancers in Kubernetes and already know a bit about them. But it is always good to go “back to basics” and remember why we use these services in the first place. A load balancer is a device or service that distributes incoming traffic across a number of servers. It is used to increase the capacity (concurrent users/connections) and the reliability of applications. Load balancers are generally grouped into two categories depending on the OSI layer they operate at; in this article, those are the SLB and the ALB.

Server Load Balancer (SLB) vs Application Load Balancer (ALB)

SLB stands for “Server Load Balancer” and, as used in this article, it works at layer 4 of the OSI model. ALB stands for “Application Load Balancer” and, as you may have guessed, it works at layer 7.

The basic differences between working at layer 4 and layer 7 are:

SLB on Layer 4: “Transport”

At this layer, a load balancer limits itself to efficiently distributing traffic (TCP or UDP connections) to the healthy servers in a pool/group. Clean and simple.

ALB on Layer 7: “Application”

At this layer, the load balancer works more like a reverse proxy and can route requests by host, path or headers. This means it can not only balance the load across a server pool/group, but also send different requests to different server pools/groups, depending on what the operations team wants. For example, a single entry point could serve a “website”, an “API” and special campaigns launched at specific moments. This approach is particularly useful when working with serverless applications where you need flexible routing.
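To make the layer-7 idea concrete, here is a minimal sketch of path-based routing expressed as a Kubernetes Ingress in Terraform, the same tool used later in this article. It assumes the Terraform kubernetes provider is already configured for your cluster and that two ClusterIP Services exist: “website” and a hypothetical “api”. The hostname is a placeholder, and the ALB-specific annotations (name, address type, vSwitches) are left out so the example only illustrates the routing rules.

```hcl
# Hypothetical example: one hostname, two backend pools chosen by URL path.
# Assumes ClusterIP Services named "website" and "api" already exist.
resource "kubernetes_ingress_v1" "path_routing_example" {
  metadata {
    name = "path-routing-example"

    annotations = {
      # Hand this Ingress over to the ALB Controller
      "kubernetes.io/ingress.class" = "alb"
    }
  }

  spec {
    rule {
      host = "www.example.org"

      http {
        # Requests whose path starts with /api go to the "api" Service
        path {
          path      = "/api"
          path_type = "Prefix"
          backend {
            service {
              name = "api"
              port {
                number = 80
              }
            }
          }
        }

        # All other requests go to the "website" Service
        path {
          path      = "/"
          path_type = "Prefix"
          backend {
            service {
              name = "website"
              port {
                number = 80
              }
            }
          }
        }
      }
    }
  }
}
```

A layer-4 load balancer cannot make this decision, because it never looks inside the HTTP request to see the path.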

Application Load Balancers to the rescue!

As we mentioned, SLBs and ALBs are both load balancers, but with some differences. Alibaba Cloud now provides an ALB Controller in the Kubernetes console that publishes Ingress rules through one single ALB. Although a single ALB costs more than a single SLB, it reduces the complexity of your routing considerably. Remember the term “virtual hosting”? Then this will sound familiar: one entry point serves many hostnames, each routed to its own backend.

SLB to ALB conversion

Our platform had several SLBs because we published several Services of the LoadBalancer type. The trick here is to convert these Services to the default ClusterIP type. Then you add an Ingress resource (which, from now on, is the one facing the outside world) with rules pointing to all the different Services of the application.

Summary

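Remove all SLB-related annotations from your LoadBalancer-type Services, change their type from LoadBalancer to ClusterIP, and route external traffic to them through a single Ingress handled by the ALB Controller.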
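For reference, a “before” Service that makes ACK create its own SLB might look like the sketch below. The two annotations are examples of ACK Service annotations that configure that SLB (public address, instance spec); your Services may carry different ones, and the label `app = "website"` is an assumption about how your Pods are labelled. The conversion described above amounts to deleting the whole `annotations` map and changing `type` to `"ClusterIP"`.

```hcl
# "Before" state: a LoadBalancer-type Service. ACK provisions one SLB
# for every Service like this, so N Services means N SLBs.
resource "kubernetes_service" "website" {
  metadata {
    name = "website"

    # SLB-specific settings read by ACK (examples only). Delete this
    # whole map when converting the Service to ClusterIP.
    annotations = {
      "service.beta.kubernetes.io/alibaba-cloud-loadbalancer-address-type" = "internet"
      "service.beta.kubernetes.io/alibaba-cloud-loadbalancer-spec"         = "slb.s1.small"
    }
  }

  spec {
    # Change to "ClusterIP" so no SLB is created for this Service
    type = "LoadBalancer"

    # Assumed Pod label; must match the labels on your Deployment's Pods
    selector = {
      app = "website"
    }

    port {
      name        = "http"
      port        = 80
      target_port = 80
    }
  }
}
```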

Terraform config for 3 Deployments and 3 subdomains

The following example assumes you have 3 Deployments called “website”, “wordpress” and “campaign”, all listening on port 80. With this setup, exposing a new Deployment is just a matter of adding a Service and a “virtual host” sub-domain rule for it. The ALB Controller will update the ALB for you.

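```hcl
# Assumes the Terraform "kubernetes" provider (a recent v2.x release) is
# configured for your ACK cluster. kubernetes_ingress_v1 uses the
# networking.k8s.io/v1 Ingress API; the older kubernetes_ingress resource
# relies on v1beta1 APIs that were removed in Kubernetes 1.22.
resource "kubernetes_ingress_v1" "app-entrypoint" {
  metadata {
    name = "app-entrypoint"

    annotations = {
      # Hand this Ingress over to the ALB Controller
      "kubernetes.io/ingress.class" = "alb"

      # Name, network type and vSwitches of the ALB the controller manages
      "alb.ingress.kubernetes.io/name"         = "app-entrypoint"
      "alb.ingress.kubernetes.io/address-type" = "internet"
      "alb.ingress.kubernetes.io/vswitch-ids"  = "vsw-uf6xxxxxxxxxxxxxxxxx,vsw-uf6xxxxxxxxxxxxxxxxx"

      # Health checks are disabled here; set this to "true" for the
      # settings below to take effect
      "alb.ingress.kubernetes.io/healthcheck-enabled"          = "false"
      "alb.ingress.kubernetes.io/healthcheck-path"             = "/"
      "alb.ingress.kubernetes.io/healthcheck-protocol"         = "HTTP"
      "alb.ingress.kubernetes.io/healthcheck-method"           = "HEAD"
      "alb.ingress.kubernetes.io/healthcheck-httpcode"         = "http_2xx"
      "alb.ingress.kubernetes.io/healthcheck-timeout-seconds"  = "5"
      "alb.ingress.kubernetes.io/healthcheck-interval-seconds" = "2"
      "alb.ingress.kubernetes.io/healthy-threshold-count"      = "3"
      "alb.ingress.kubernetes.io/unhealthy-threshold-count"    = "3"
    }
  }

  spec {
    # One rule per sub-domain ("virtual host"), each pointing at a Service
    rule {
      host = "www.example.org"
      http {
        path {
          path      = "/"
          path_type = "Prefix"
          backend {
            service {
              name = "website"
              port {
                number = 80
              }
            }
          }
        }
      }
    }

    rule {
      host = "cms.example.org"
      http {
        path {
          path      = "/"
          path_type = "Prefix"
          backend {
            service {
              name = "wordpress"
              port {
                number = 80
              }
            }
          }
        }
      }
    }

    rule {
      host = "campaign.example.org"
      http {
        path {
          path      = "/"
          path_type = "Prefix"
          backend {
            service {
              name = "campaign"
              port {
                number = 80
              }
            }
          }
        }
      }
    }
  }
}

# ClusterIP Services: reachable only inside the cluster, so ACK does not
# create an SLB for them. The selectors assume each Deployment labels its
# Pods with app=<name>; adjust them to match your own labels.
resource "kubernetes_service" "website" {
  metadata {
    name = "website"
  }

  spec {
    type = "ClusterIP"

    # selector is a map of Pod labels, not a plain string
    selector = {
      app = "website"
    }

    port {
      name        = "http"
      port        = 80
      target_port = 80
    }
  }
}

resource "kubernetes_service" "wordpress" {
  metadata {
    name = "wordpress"
  }

  spec {
    type = "ClusterIP"

    selector = {
      app = "wordpress"
    }

    port {
      name        = "http"
      port        = 80
      target_port = 80
    }
  }
}

resource "kubernetes_service" "campaign" {
  metadata {
    name = "campaign"
  }

  spec {
    type = "ClusterIP"

    selector = {
      app = "campaign"
    }

    port {
      name        = "http"
      port        = 80
      target_port = 80
    }
  }
}
```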
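If you expect to keep adding sub-domains, one option is to drive both the Ingress rules and the Services from a single map, so that exposing a new Deployment means adding one line. The sketch below is an alternative to the explicit configuration above, not an addition to it (it creates Kubernetes objects with the same names). It assumes the same `app=<name>` Pod labels and port 80 everywhere, and it omits the health-check annotations for brevity.

```hcl
# Hypothetical alternative: one map drives the Ingress rules and Services.
locals {
  # hostname => Service name (every Service listens on port 80)
  apps = {
    "www.example.org"      = "website"
    "cms.example.org"      = "wordpress"
    "campaign.example.org" = "campaign"
  }
}

resource "kubernetes_service" "app" {
  # One ClusterIP Service per distinct Service name in the map
  for_each = toset(values(local.apps))

  metadata {
    name = each.key
  }

  spec {
    type = "ClusterIP"

    # Assumes each Deployment labels its Pods with app=<service name>
    selector = {
      app = each.key
    }

    port {
      name        = "http"
      port        = 80
      target_port = 80
    }
  }
}

resource "kubernetes_ingress_v1" "app_entrypoint" {
  metadata {
    name = "app-entrypoint"

    annotations = {
      "kubernetes.io/ingress.class"            = "alb"
      "alb.ingress.kubernetes.io/name"         = "app-entrypoint"
      "alb.ingress.kubernetes.io/address-type" = "internet"
      "alb.ingress.kubernetes.io/vswitch-ids"  = "vsw-uf6xxxxxxxxxxxxxxxxx,vsw-uf6xxxxxxxxxxxxxxxxx"
    }
  }

  spec {
    # Generate one host rule per map entry
    dynamic "rule" {
      for_each = local.apps

      content {
        host = rule.key

        http {
          path {
            path      = "/"
            path_type = "Prefix"
            backend {
              service {
                # Referencing the Service makes Terraform create it first
                name = kubernetes_service.app[rule.value].metadata[0].name
                port {
                  number = 80
                }
              }
            }
          }
        }
      }
    }
  }
}
```

The trade-off is readability. Three explicit rules are easier to scan, but with a dozen sub-domains the map keeps the file short and makes it harder to forget either the Service or the rule.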

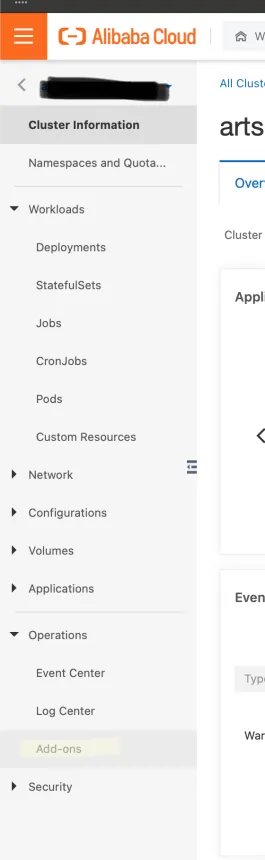

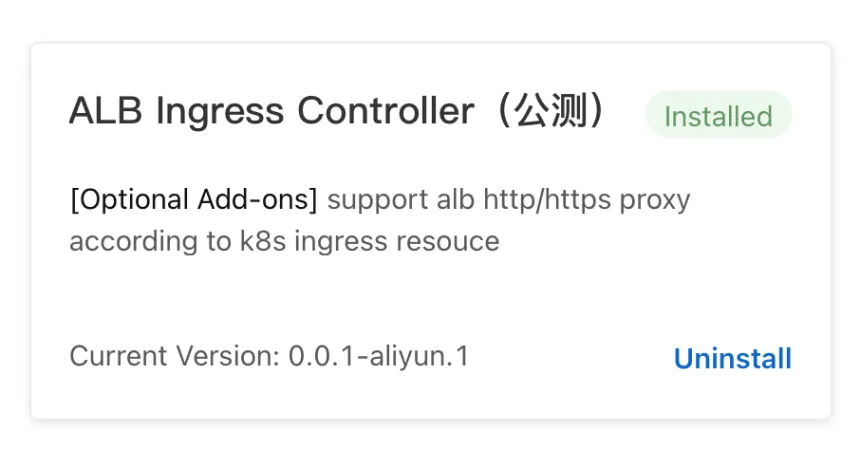

Special notes for the ALB Controller

Sometimes an ACK cluster won’t have the ALB Controller installed by default. To check, open your cluster in the console and go down the left-hand menu to “Add-ons”, like in the picture below:

On the Add-ons screen, make sure the ALB Controller shows as “Installed”.
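If you manage the cluster itself with Terraform, the alicloud provider’s `alicloud_cs_kubernetes_addon` resource can install a cluster component so you don’t have to do it through the Add-ons screen. This is a sketch under assumptions: the add-on name `alb-ingress-controller` and both input variables are hypothetical here, so check the name and an available version against the Add-ons list for your cluster before applying.

```hcl
# Hypothetical inputs: the ACK cluster ID and an ALB Controller version
# that the Add-ons page offers for your cluster.
variable "cluster_id" {
  type = string
}

variable "alb_controller_version" {
  type = string
}

# Installs the ALB Controller add-on on the cluster.
# The add-on name is an assumption; verify it in the console.
resource "alicloud_cs_kubernetes_addon" "alb_controller" {
  cluster_id = var.cluster_id
  name       = "alb-ingress-controller"
  version    = var.alb_controller_version
}
```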

Conclusion

Again, although a single ALB costs more than a single SLB, it quickly becomes the more scalable solution as your application grows. You can launch new Deployments without worrying about the number of SLBs piling up. This is especially useful for small platforms, where a Deployment often runs only one Pod because traffic is low, so a dedicated SLB per Service would sit mostly idle.

This approach to Kubernetes networking represents Alibaba Cloud’s commitment to making cloud services more accessible and efficient. Similar to how they’ve made other services more approachable through their Academy and certification programs, the ALB Controller simplifies the complexity of managing multiple load balancers in a Kubernetes environment.

For more information about Alibaba Cloud’s Kubernetes offerings, check out our article on testing the Alibaba Cloud Elastic Desktop Service, which also leverages Alibaba Cloud’s infrastructure capabilities.