OSS, the Alibaba Cloud Object Storage Service, can be a great way to save money when hosting static websites while also speeding up a site by avoiding unnecessary database queries and server-side processing. Compared to other object storage solutions like AWS S3, OSS comes packed with some very useful features. As I have already written about many of the features of OSS, in this article I’ll focus on how to speed up deployments of large static sites like the ones built with Jekyll or Hugo.

So What Is This All About?

When deploying a static site to OSS, we can use tools like ossutil to copy all the site assets to a bucket by running something like:

ossutil cp --recursive --force --endpoint=oss-accelerate.aliyuncs.com directory oss://bucket-name/

Running this is usually fine, but when your website becomes more complex (like my blog, this very website), the number of files can reach 3000~5000 tiny HTML files spread across countless folders.

Uploading that many files, even if the total size is no more than a few MB, can take several minutes. This happens because the OSS API has to process and verify the files one by one as you upload them.
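To check whether a site falls into this "many tiny files" category, a quick count like the one below can help. This is only a sketch: `site_stats` is a helper name made up for this example, and it simply reports the number of files and the total size of a build folder.

```shell
#!/bin/sh
# Hypothetical helper (not part of ossutil): print how many files a
# build folder contains and how much disk space they use in total.
site_stats() {
    dir="$1"
    # Count regular files only; tr strips the padding some wc versions add.
    files=$(find "$dir" -type f | wc -l | tr -d ' ')
    # Total size in kilobytes, taken from the first column of du's output.
    size_kb=$(du -sk "$dir" | cut -f1)
    echo "files=$files size_kb=$size_kb"
}
```

Calling `site_stats directory` on the build output prints a single line with both numbers. A high file count next to a small size is the situation described above.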

What Is The Solution?

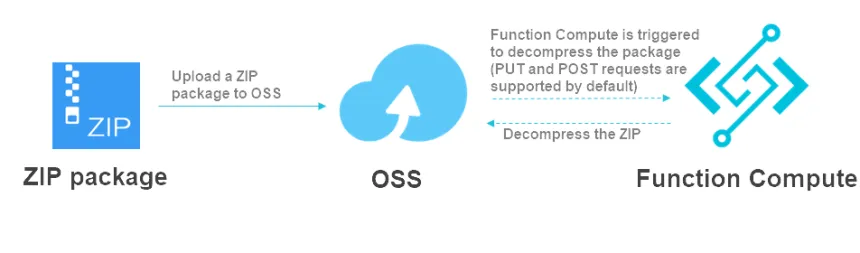

As mentioned before, OSS comes packed with many features out of the box. One of those features is the option to upload a single ZIP file and let OSS handle the unzipping for you. When you zip a folder, you create a single, compressed file containing all the files and folders in it. Uploading that one file is much faster than uploading each file individually, especially if you have a slow or unreliable internet connection.

Configuring OSS To Unzip Your Uploads For You

For this, OSS will configure a Function Compute service completely for you, so you don’t need to know anything about coding or setting up a serverless function.

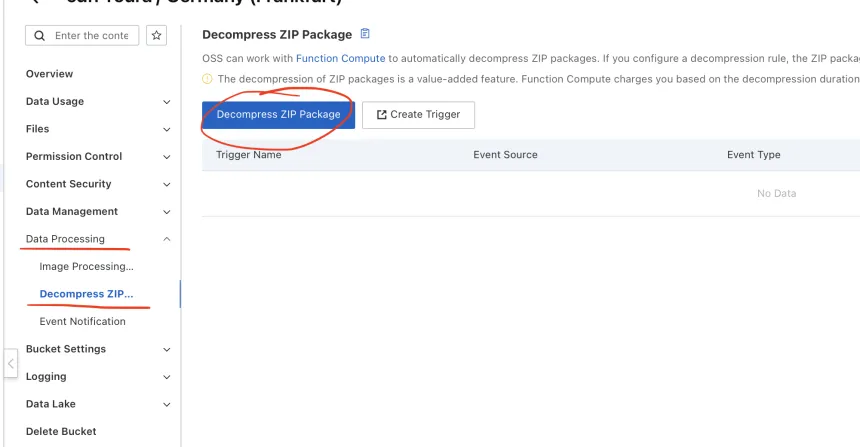

First of all, go to your OSS console and navigate to the bucket where you want to activate this feature.

Then, once there, use the left-side menu to go to “Data Processing > Decompress ZIP Package” and click “Decompress ZIP Package” as shown in the screenshot below:

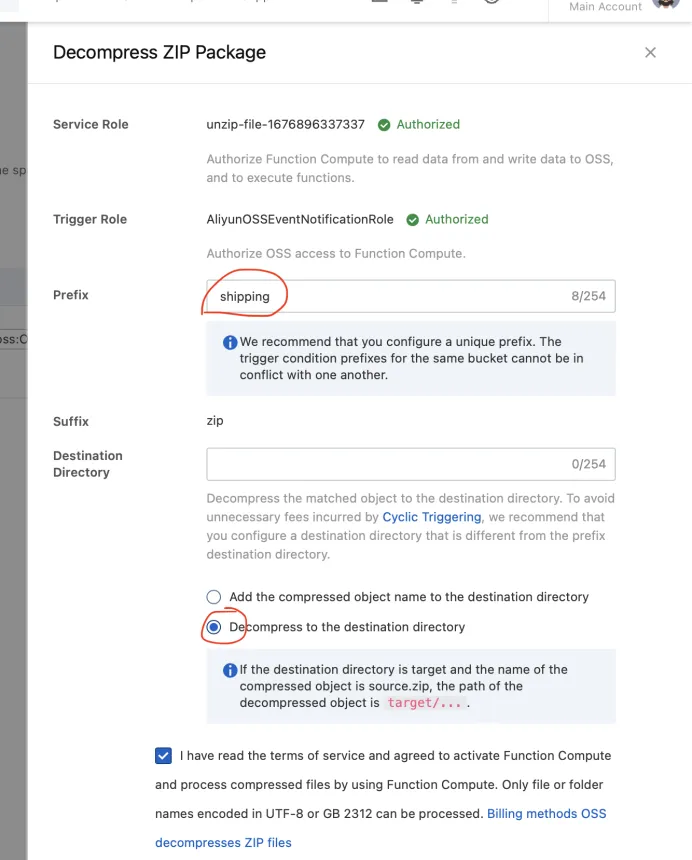

If this is the first time you do this with that bucket, you will be prompted to “Authorize” Function Compute to read data from and write data to OSS, and to execute functions.

After this, you are good to go. This step creates a Function Compute service, a Python function and a trigger, so that if you upload a zip file called shipping.zip to the bucket, it gets unzipped almost instantly for you in the root directory.

So now, instead of just uploading all the files directly like before, you could update your deployment script to:

cd directory && zip -q -r ../shipping.zip ./* && cd ../

ossutil cp --force --endpoint=oss-accelerate.aliyuncs.com shipping.zip oss://bucket-name/
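Put together, the deployment step could look roughly like the sketch below. The function names `build_archive` and `deploy` are invented for this example, and `directory` and `bucket-name` are the same placeholders as above. Two details are my own assumptions rather than requirements of OSS: the old archive is deleted first, because `zip` updates an existing archive instead of replacing it, and `.` is used instead of `./*` so that top-level dotfiles are included too.

```shell
#!/bin/sh
# Sketch of a zip-based deploy. build_archive and deploy are
# hypothetical helper names, not part of ossutil.

# Create a fresh archive with everything inside the source folder.
build_archive() {
    src="$1"
    out="$2"       # must be an absolute path, because we cd into $src
    rm -f "$out"   # otherwise zip would add to the previous archive
    # Run in a subshell so the caller's working directory is untouched.
    ( cd "$src" && zip -q -r "$out" . )
}

# Build the archive, then upload it with the same ossutil flags as above.
deploy() {
    src="$1"
    bucket="$2"
    archive="$(pwd)/shipping.zip"
    build_archive "$src" "$archive" || return 1
    ossutil cp --force --endpoint=oss-accelerate.aliyuncs.com \
        "$archive" "oss://$bucket/"
}
```

With these two functions loaded, `deploy directory bucket-name` rebuilds shipping.zip in the current folder and uploads it in one step.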

My Personal Experience

In my case, deploying albertoroura.com using zip uploads reduced my deployment time from 7 minutes to less than 20 seconds, as my blog is composed of around 3000 HTML files.
